Making AI agents production-ready requires plenty of architectural clarity and operational rigor. Don't be one of the organizations that learn that too late.

How To Create an AI Agent: Key Findings

- Building a successful AI agent requires clear architectural design, defined decision boundaries and embedded governance from day one.

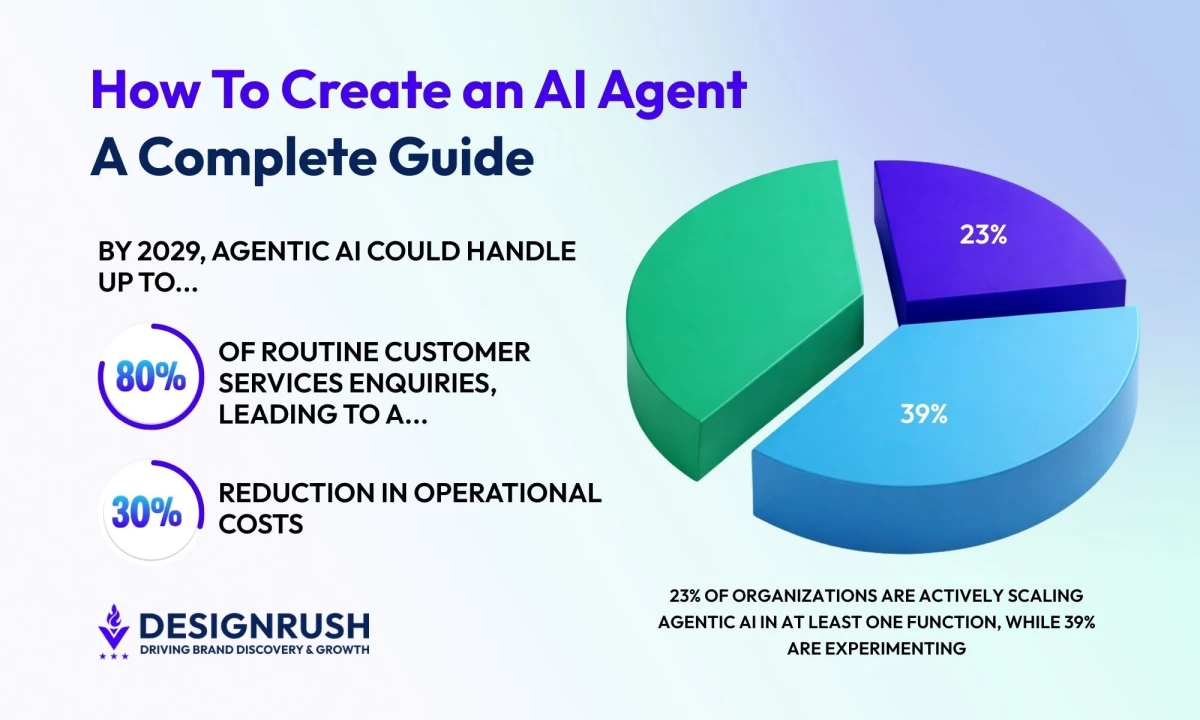

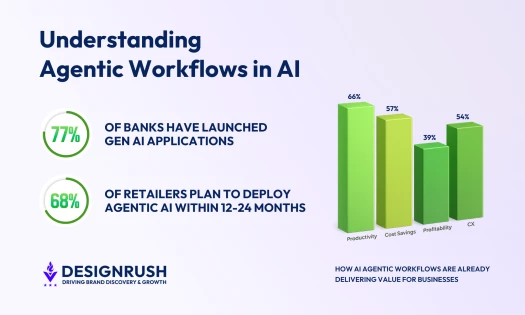

- 23% of organizations are already scaling agentic AI in at least one function, while 39% are actively experimenting, showing a rapid shift from pilot programs to production deployment.

- The highest-performing agents are anchored to specific business objectives, integrated into real systems and continuously measured against operational and financial outcomes.

What an AI Agent Is (and Isn’t)

An AI agent can plan, decide and take action within defined constraints to complete tasks autonomously. It's a goal-directed system capable of reasoning about tasks, selecting tools, and adapting based on outcomes.

More than 88% of companies are using AI in some form, up from 72% in 2024, but here’s the distinction: a chatbot reacts to prompts, a copilot assists within a workflow, and a rules-based automation script executes deterministic logic.

An AI agent, by contrast, can:

- Interpret intent

- Break a task into sub-steps

- Decide which tools to use

- Execute those tools

- Evaluate results

- Iterate if necessary

Agentic AI is moving from pilot to production as 23% of organizations report they are actively scaling an agentic AI system within at least one business function. Another 39% say they’ve begun experimenting with AI agents in their operations.

To succeed with agentic AI, you need a clear blueprint for how to structure the architecture, define decision boundaries, integrate the right tools and embed governance to move confidently from prototype to production.

The Core Architecture of an AI Agent

Under the hood, most production-grade agents include five layers:

- Reasoning engine: Usually a large language model (LLM) that interprets intent, evaluates context and determines the next best action based on defined goals and constraints.

- Tool layer: A set of APIs and system integrations that enable the agent to interact with external platforms like CRMs, databases or ticketing systems to execute real work.

- Memory layer: A combination of short-term session context and longer-term retrieval mechanisms that keep the agent grounded in relevant history, policies and structured data.

- Orchestration logic: The framework that structures how the agent sequences decisions, selects tools and determines when a task is complete.

- Governance controls: The permissions, validation rules and audit mechanisms that constrain the agent’s authority and ensure its actions remain secure and traceable.

AI solutions need more long-term investment (data infrastructure, model lifecycle maintenance, monitoring, governance) before they begin to pay off. That makes traditional automation the more cost-effective choice if the decisions it’ll be making aren’t too complex or varied.

Anchoring the Agent to a Clear Business Objective

You should always begin with a clear purpose. The most common failure mode in agent development is building one because it sounds impressive. Instead, start with a point of friction.

Where are decisions repetitive but still require judgment? Where are humans acting as routers between systems? Where do bottlenecks form because information lives in multiple tools?

An AI agent should solve a clearly bounded problem where reasoning adds measurable value. If your workflow is fully deterministic, traditional automation will outperform an agent every time (at lower cost and with higher reliability).

High-potential use cases often include:

- Support ticket triage and resolution

- Sales lead qualification and routing

- Claims processing

- Invoice reconciliation

- Internal knowledge retrieval

According to Gartner, by 2029 agentic AI systems are expected to autonomously handle up to 80% of routine customer service inquiries, a shift projected to reduce operational costs by roughly 30% as human intervention becomes the exception rather than the norm.

Choosing the Right Frameworks, Protocols and Platforms

Design decisions at this stage will determine how scalable, secure and maintainable your agent becomes. The tools you select should align with your use case, technical maturity and governance requirements.

- Select an agent framework

- Evaluate agent protocols and interoperability

- Choose the right platform environment

- Embed security and governance by design

1. Select an Agent Framework

Agent frameworks provide the scaffolding for reasoning, tool use, memory and orchestration.

Popular options include:

- LangChain: Modular framework for building LLM-powered agents with tool integrations and memory components.

- LlamaIndex: Designed for retrieval-augmented agents that connect structured and unstructured data sources.

- AutoGen: Multi-agent conversation framework suited for collaborative and autonomous task execution.

- CrewAI: Role-based multi-agent orchestration with task delegation patterns.

- Semantic Kernel: SDK for embedding AI agents into enterprise applications with strong .NET and Python support.

- Haystack: Open-source framework optimized for search, question answering and RAG pipelines.

Your framework should support tool calling, memory management, observability and governance hooks from the outset.

2. Evaluate Agent Protocols and Interoperability

@ainuggetz If you’re building AI agents, you need to know these 4 protocols. MCP, A2A, ACP, ANP each one solves a different problem in how agents connect, talk, and work together. Save this for your AI playbook. #AIagents#AgentProtocols#AIbuilders#mcp#a2a#aitools♬ original sound - Nardeep Singh

Protocols define how agents interact with tools, systems and even other agents. As ecosystems mature, interoperability becomes critical. Choose protocols that support authentication, validation and auditability.

- Model Context Protocol: Standardizes how models securely connect to external tools and data sources.

- OpenAPI Specification: Enables structured API definitions that agents can reliably interpret and call.

- JSON-RPC: Lightweight protocol for structured remote procedure calls.

- GRPC: High-performance communication protocol for distributed systems.

- FIPA Agent Communication Language: Early standard for structured communication between software agents.

3. Choose the Right Platform Environment

@benjamlns What AI agent platform should you use? 👀

♬ original sound - Ben | AI Agents

Platforms determine how your agent is hosted, monitored and scaled in production. The right environment depends on your compliance requirements, data sensitivity, latency constraints and existing cloud strategy.

- OpenAI: Provides APIs and managed infrastructure for deploying tool-using AI agents.

- Microsoft Azure: Enterprise cloud with AI services, governance controls and compliance tooling.

- Amazon Web Services: Scalable cloud infrastructure with orchestration and serverless integrations.

- Google Cloud: AI platform ecosystem with data integration and MLOps capabilities.

- IBM (watsonx): Enterprise AI platform with governance, lifecycle management and hybrid deployment options.

- Anthropic: Model provider with enterprise-focused safety and alignment controls.

4. Embed Security and Governance by Design

Security cannot be retrofitted once autonomy is introduced. Governance should be architected into the system from day one.

Here’s how to treat autonomy as an engineering discipline:

- Implement role-based access controls (RBAC) for tool usage.

- Log and audit every tool call and decision step.

- Use human-in-the-loop checkpoints for high-impact actions.

- Establish clear permission boundaries for data access.

- Continuously monitor outputs for drift, misuse or policy violations.

Designing the Agent’s Operating Model

Since an AI agent will make decisions and influence outcomes, you need an operating model that clearly defines how it behaves, when it acts, and where human authority begins and ends.

- Define how the agent thinks and acts

- Establish human oversight and escalation paths

- Build feedback loops for continuous improvement

1. Define How the Agent Thinks and Acts

Every agent needs explicit boundaries around autonomy, clearly defining what it is allowed to decide, which systems it can access, how far it can act without approval and where human judgment must take precedence.

Start by defining:

- Decision scope: What types of tasks can it complete end-to-end without intervention?

- Tool authority: Which systems can it write to versus only read from?

- Reasoning constraints: What policies, thresholds or rules must always override model judgment?

- Completion criteria: How does the agent determine when a task is done?

High-performing agents operate within clearly structured reasoning loops:

- Interpret intent

- Plan sub-steps

- Select tools

- Execute actions

- Evaluate outcomes

- Iterate or conclude

This loop should be deliberate, not emergent. If you can't explain how the agent decides, you can't govern it.

2. Establish Human Oversight and Escalation Paths

Autonomy should be proportional to risk, meaning the higher the potential financial, legal or reputational impact of a decision, the greater the need for oversight, validation and human involvement.

Low-impact decisions (like categorizing tickets) may require only logging and monitoring. High-impact actions (like issuing refunds, modifying contracts, approving claims) need escalation thresholds.

Offering advice for customer service, founder of Omnie, Jordan Brown says to “continuously monitor metrics like resolution times and customer satisfaction to address issues early, ensuring AI enhances workflows effectively.”

Define:

- Confidence thresholds: When does the agent escalate due to uncertainty?

- Exception triggers: What edge cases automatically require human review?

- Override mechanisms: How can humans intervene, pause or reverse actions?

- Accountability ownership: Who is responsible for agent outcomes?

Human-in-the-loop is not a failure mode but a control system. The goal is not to remove humans entirely, but to move them from an execution role to supervising.

As Brown adds, exceptional customer experiences will come through a collaboration between automation and human expertise. “Businesses should train their teams to work alongside AI,” he says.

3. Build Feedback Loops for Continuous Improvement

Agents improve only if their performance is measured and corrected.

Operationalize feedback at three levels:

- Output quality: Track resolution accuracy, task completion rates and downstream error frequency.

- Behavioral reliability: Monitor tool misuse, repeated retries, hallucination patterns and escalation frequency.

- Business impact: Measure cycle time reduction, cost savings, customer satisfaction and throughput gains.

Structured feedback loops may include:

- User rating mechanisms

- Periodic human audits

- Automated anomaly detection

- Prompt and policy refinement cycles

- Model retraining or fine-tuning (where applicable)

Without proper instrumentation, an agent is a black box; with it, the agent can be measured, refined and continuously improved.

Avoiding 5 Common AI Agent Mistakes

As enthusiasm around agentic AI grows, so does the number of underperforming deployments. Most failures aren’t caused by weak models. They stem from foundational gaps in the form of data that isn’t ready, integrations that aren’t robust, or governance that wasn’t designed from the start.

If you want your AI agent to scale beyond pilot mode, avoid these five common mistakes.

- Overlooking data readiness

- Underestimating tool integrations

- Failing to define context boundaries

- Skipping formal evaluation

- Treating governance and security as afterthoughts

1. Overlooking Data Readiness

AI agents are only as reliable as the data they’re grounded in. That means having structured, clean, secure and accessible data (not just large volumes of it).

Many teams discover too late that their knowledge bases are outdated, their CRM fields are inconsistent or their internal documentation lacks version control. When that happens, the agent fails subtly, producing confident but flawed outputs.

Before building the agent, assess whether your data is labeled appropriately, permissioned correctly and maintained consistently. Clean data isn’t a refinement step. It’s a prerequisite.

2. Underestimating Tool Integrations

Reasoning alone doesn’t create business value. Execution does.

An AI agent must act inside real systems, whether that’s updating a CRM record, issuing a refund through a billing platform or routing tickets in a support tool. Weak integrations lead to brittle workflows, latency issues or incomplete task execution.

Integration architecture should include structured API schemas, clear error handling and defined retry logic. If an agent cannot reliably interact with your core systems, it’ll create new bottlenecks rather than addressing them.

3. Failing to Define Context Boundaries

Agents need access to information, but not unlimited access.

Context rules determine what the agent is allowed to retrieve, under what circumstances and from which sources. Without defined boundaries, agents risk pulling irrelevant, outdated or sensitive data into decision flows.

View this post on Instagram

Well-designed agents operate within clearly scoped retrieval rules and validation checks. They don’t know everything, only what they’re authorized to know. That distinction is critical for both performance and compliance.

4. Skipping Formal Evaluation

Before scaling an agent, you should establish clear, measurable standards for success. That includes accuracy benchmarks, latency thresholds, acceptable hallucination tolerance and escalation frequency. Structured testing (including adversarial inputs and edge cases) is essential.

Without an evaluation framework, you can’t distinguish between novelty and reliability. And reliability is what determines whether stakeholders trust the system.

5. Treating Governance and Security as Afterthoughts

AI agents have meaningful authority if they’re accessing customer data, modifying records or triggering financial transactions. That makes governance non-negotiable.

Permissions must be tightly scoped. Actions should be logged. Decisions must be traceable. And escalation paths should be clearly defined.

Success with agentic AI comes from building security and auditability into the architecture from day one and avoiding a compliance retrofit.

Experienced implementation partners can make a real difference. Instinctools, for example, builds custom single- and multi-agent systems for enterprise environments and offers its GENiE™ accelerator to help teams move faster without the risks.

For teams looking to start strategically, Instinctool's AI Adoption Workshop can help identify one or two high-impact workflows, define success metrics and map a realistic implementation roadmap.

How To Create an AI Agent: Wrapping Up

AI agents might not be a shortcut to automation maturity, but they are an acceleration of it. If you define clear problems, enforce disciplined governance, build deliberately, measure relentlessly and scale only when the system proves it can earn trust, your business could reap the rewards.

Our team ranks agencies worldwide to help you find a qualified partner. Visit our Agency Directory for the top AI Companies, as well as:

- Top AI Automation Companies

- Top AI Consulting Companies

- Top AI Marketing Companies

- Top Generative AI Companies

- Top AI App Development Companies

How To Create an AI Agent FAQs

1. How long does it take to build and deploy an AI agent?

Timelines vary based on complexity, integrations and data readiness, but a focused pilot can often be developed in 6–12 weeks. Production deployment typically takes longer due to security reviews, governance controls and integration hardening.

2. Do AI agents require fine-tuning or custom model training?

Not always. Many agents can perform effectively using strong foundation models combined with retrieval-augmented generation (RAG) and structured prompts, reserving fine-tuning for highly specialized domains or performance optimization.

3. How do you measure ROI for an AI agent?

ROI should be tied to operational metrics such as cycle time reduction, cost per transaction, deflection rates, revenue lift or error reduction. The key is defining baseline performance before deployment so improvements can be clearly attributed.

4. Can AI agents work with legacy systems?

Yes, but integration maturity is a key factor. Legacy systems may require API layers, middleware or robotic process automation (RPA) bridges to enable reliable interaction, which can increase implementation effort.

5. What skills does a team need to build and manage AI agents?

Successful deployments usually require cross-functional expertise: machine learning or prompt engineering, backend integration, security and compliance oversight, and operational stakeholders who understand the business workflow being automated.